Career Advice

Freelance guidance, interview tips, and media career advice to help you move forward.

Latest Articles

Media Sales and Revenue Roles Are Heating Up This June

Local Media and Public Radio Jobs Hiring Now in June 2026

Storytelling Roles Dominate Today’s Media Job Market

Mission-Driven Media Jobs Hiring Now: Comms, Design, and Writing Roles

Mediabistro Weekly Drop: Ghosting the Croisette Edition

Design Journalism and Mission-Driven Comms Roles Hiring Now

Media Startups and Niche Brands Are Hiring Storytellers Today

International Media and Health Content Roles Hiring Now

PMI’s CMO on Why Membership Marketing Looks a Lot Like Media

Stories, ideas, and perspectives to spark your next creative move. Be Inspired features profiles, essays, and thought pieces that celebrate the work of media and creative professionals and remind you why you got into the industry in the first place.

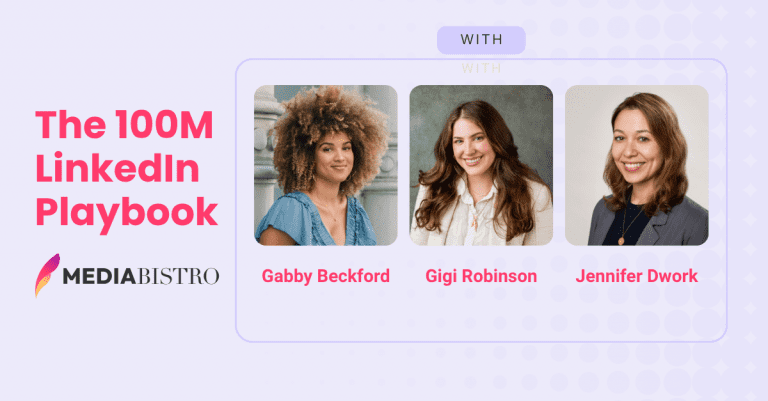

One Creator With 34,000 Followers Generated 100 Million LinkedIn Impressions Last Year. Most Media Companies Can’t Come Close.

How to Transition From Editorial to a Social Media Career

7 Surprising Things You Didn’t Know About Unions in Media

5 Signs It’s Time to Start Looking for a Different Job

SEO Specialist, Expert, and Consultant: What These Positions Do and How to Get Hired

5 Bad Writing Habits That Hurt Your Career (And How to Fix Them)

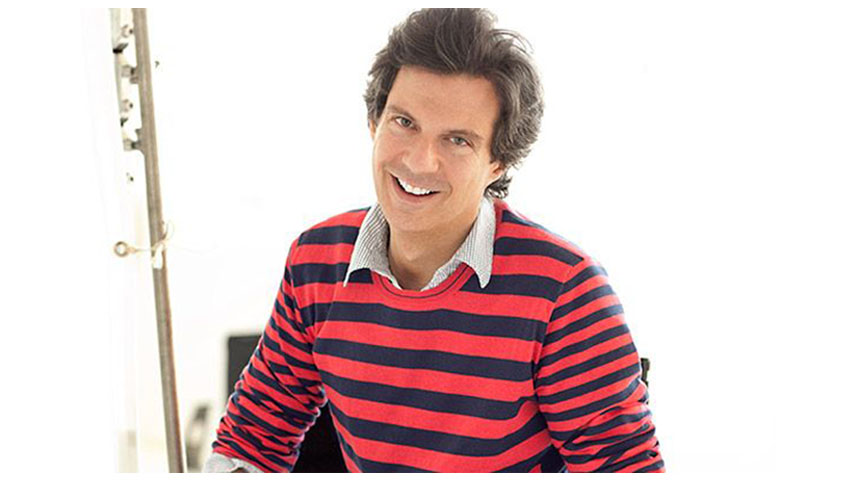

Adam Glassman Interview: What It’s Like Being Creative Director at O, The Oprah Magazine

Resources and guidance for job seekers in media, marketing, and creative industries. From crafting your personal brand to navigating the hiring process, Candidates gives you the tools and insights to position yourself as the standout choice.

How to Withdraw a Job Application: Email Templates & Examples for Every Situation

How to Write a Thank-You Email After an Interview (With Copy-Paste Templates)

How to Reschedule a Job Interview Professionally (With Email Templates)

5 Skills You Need to Work at a Startup

How to Send References in an Email: Templates & Examples for Every Step

Thank You Email After a Bad Interview: How to Recover (With Templates)

How to Choose Job References (Yes, You Can Use Coworkers)

News and developments at the intersection of media careers and education. From journalism school updates and industry training programs to workforce trends and skills in demand, Careers & Education keeps you informed on what’s shaping the next generation of media professionals.

Will My Job Still Exist in 10 Years?

Why The Mediabistro Weekly Drop Is the Must-Read Media Careers Newsletter

5 Best AI & Tech Courses for Writers to Future-Proof Their Career (2026)

Best private colleges in America

States with the highest SAT scores

Best big college towns in America

‘Failing our kids’: Philadelphia’s struggle highlights how young learners nationwide miss out on legally mandated support services

Practical strategies for advancing your media or creative career. Climb the Ladder delivers tips on getting promoted, negotiating raises, building your professional network, and making smart moves that take you to the next level.

What Does a Copywriter Do? Responsibilities, Skills & Career Guide

How to Network Like a Boss

Are Cover Letters Still Necessary? What Hiring Managers Really Think

How to Follow Up After a Job Interview (With Example Emails)

Marketing Professional Associations: The Best Organizations to Join

What Does an Account Manager Do? Skills, Salary & How to Break In

What Does an Event Planner Do? Responsibilities, Skills, Salary & Career Path

News and insights from the entertainment industry. From film and television to streaming, music, and gaming, our entertainment coverage tracks the deals, talent moves, and business developments that matter to media and creative professionals.

How 2026 AMA Artist of the Year nominees rank by platform data

The salary you need to live comfortably in 100 US cities

Which Eurovision 2026 tracks are leading on momentum and platform data

The 8 fastest-growing outdoor and mobile businesses this summer

Award season prep: The hidden beauty treatments powering red carpet and on-screen looks

Noah Wyle Visited a Real Pittsburgh Clinic Before Naming It on The Pitt. The Real Cases Are Just as Dramatic.

Why Justin Bieber’s Coachella Duet With His Younger Self Struck a Nerve

Your roadmap to landing a role in media. Get a Media Job covers the full journey — from identifying the right opportunities and tailoring your application to interviewing well and negotiating your offer in journalism, publishing, marketing, and beyond.

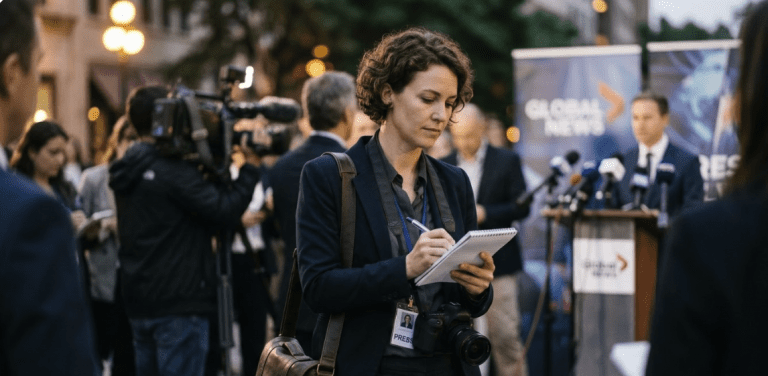

Employment Opportunities in Journalism: Where the Jobs Are Right Now

How to Use Social Media Marketing to Land Your Next Creative Job

Bookkeeping 101: A Freelancer’s Guide to Better Business Finances

Journalism Jobs in 2026: Where the Work Is and How to Get It

Goodbye Email When Leaving a Job: 7 Rules, a Template & What NOT to Say

How To Network in Any Situation

What Journalists Should Know Before Switching to PR

Everything you need to land your next media or creative role. Get Hired covers resume writing, interview prep, portfolio tips, job search strategies, and insider advice to help you stand out and get the offer.

4 Essential Steps to Repair Your Online Reputation

How to Find a Job Before It’s Posted

TV News Jobs: A Field Guide to Every Role in the Newsroom

Write a Cover Letter for a Job You Want Without Sounding Desperate

Should You Hire a Professional Resume Writer? Here’s How to Decide

Reasons You Need a Professional Resume Writer

Why You Need an Updated Resume — Even When You’re Not Job Hunting

Your guide to building a successful freelance career in media and creative industries. Go Freelance covers finding clients, setting rates, managing your business, and navigating the unique challenges of working for yourself.

6 Ways to Track Down a Magazine Editor’s Email for Your Pitch

Five Signals It’s Time to Leave Freelancing (Even If You Don’t Want To)

Bookkeeping 101: A Freelancer’s Guide to Better Business Finances

Freelance Writing Jobs: Where to Find Work & How to Get Started in 2026

How to Get Your First Magazine Feature Story Accepted

Hed, Dek, Lede & More: 10 Journalism Terms Every Freelance Writer Should Know

6 Reasons a Journalism Degree Is Still Necessary

Conversations with the people shaping media and creative industries. Our interviews feature editors, executives, creators, and professionals sharing their career paths, insights on the industry, and advice for those looking to follow in their footsteps.

How Complex’s SVP of Brand Partnerships Turned Cultural Moments Into Commerce

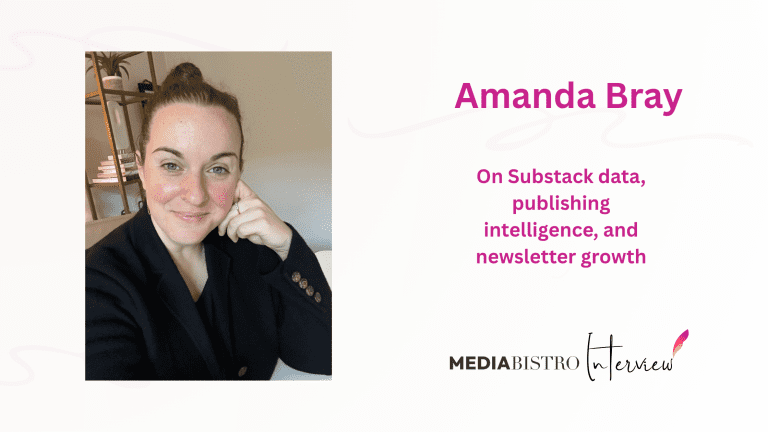

Amanda Bray Wants Substack Creators to Stop Flying Blind

Monica Elias Has Spent Nearly 30 Years Thinking Like a Producer. Here’s What She Learned.

So What Do You Do, Frank Warren, Founder, PostSecret Project?

So What Do You Do, Isaac Mizrahi, Fashion Icon and Creative Director?

Harvey Levin: Founder and TMZ Host. Interview with the Celebrity News Pioneer

So What Do You Do, Nicholas Sparks, Bestselling Romance Novelist?

Explore the latest from Los Angeles, the epicenter of entertainment and media. From Hollywood studio deals and streaming wars to agency moves and shifts in the creative job market, our LA coverage keeps media and entertainment professionals informed about the stories shaping the industry.

Hottest Mays in California since 1895

Coldest Mays in California since 1895

See how much it costs to attend San Diego Padres games

See how much it costs to attend San Francisco Giants games

See how much it costs to attend Los Angeles Angels games

See how much it costs to attend Los Angeles Dodgers games

So What Do You Do, Ted Genoways, Editor, Virginia Quarterly Review?

Sia Michel on Her First Year Running Spin and What Comes Next

Joyce Rutter Kaye on Print Magazine’s Third Ellie Nod and Losing Designers to the Web

Heather Cocks and Jessica Morgan on Parlaying a Fashion-Snark Blog Into a Book Deal

Eileen Gittins on Disrupting Traditional Publishing and Crafting a Viable Business in Self-Publishing

J-School Confidential: How One College Junior Charted His Own Path Without Journalism School

Do Campus Papers Still Matter? A Journalism Student Takes Stock

Media and creative industry news from the heart of New York City. From publishing and advertising to digital media and beyond, our NYC coverage tracks the deals, moves, and trends shaping one of the world’s most influential media markets.

Hottest Mays in New York since 1895

Coldest Mays in New York since 1895

See how much it costs to attend New York Mets games

Costs of long-term care and senior living in New York

Best draft picks in New York Giants history

Best draft picks in New York Jets history

Mediabistro Weekly Drop: Trend Cycle Edition

Mediabistro Weekly Drop: Good Vibes Only Edition

Mediabistro Weekly Drop: The Writers Block Edition

Washington Post Cuts 100 Jobs as Publishing’s 40% Decline Continues

The New Corporate Superpower Is Storytelling. Yes, Really.

Mediabistro Weekly Drop: Labor Pains Edition